Key Takeaways:

- Nvidia released Nemotron 3 Super, a 120B-parameter open MoE model activating only 12.7B parameters per forward pass.

- Nemotron 3 Super delivers up to 7.5x the throughput of Qwen3.5-122B-A10B in agent workloads with 8k input and 64k output tokens.

- The model is fully open under the Nvidia Nemotron Open Model License, with checkpoints and training data on Hugging Face.

Nvidia Launches Nemotron 3 Super With 7.5x Throughput Gains Over Qwen3.5-122B

The latest Nvidia model uses a Mixture-of-Experts (MoE) architecture that activates only 12.7 billion of its roughly 120 billion parameters per forward pass, so most of its weights sit idle for any given token. That design targets two problems developers hit when deploying multi-step AI agents: the added cost of extended reasoning chains, and token usage that can grow up to 15-fold in multi-agent pipelines.
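To put roughly 12.7 billion active parameters out of about 120 billion in perspective, the Python sketch below applies the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token. That rule is an assumption, not an Nvidia figure: it ignores attention over the context and router overhead, and the parameter counts are the rounded numbers quoted in this article.

```python
# Rough per-token compute estimate for a sparse MoE model.
# Assumption: forward-pass cost ~ 2 FLOPs per *active* parameter per token
# (a rule of thumb that ignores attention-over-context and router cost).

TOTAL_PARAMS = 120e9    # rounded total parameter count quoted in the article
ACTIVE_PARAMS = 12.7e9  # parameters activated per forward pass


def active_fraction(total: float, active: float) -> float:
    """Share of the weights that take part in one token's forward pass."""
    return active / total


def flops_per_token(params: float) -> float:
    """Approximate forward FLOPs per token under the 2*N rule of thumb."""
    return 2.0 * params


if __name__ == "__main__":
    frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
    print(f"active share: {frac:.1%}")
    print(f"sparse (active only): {flops_per_token(ACTIVE_PARAMS):.3g} FLOPs/token")
    print(f"dense equivalent:     {flops_per_token(TOTAL_PARAMS):.3g} FLOPs/token")
```

The saving is in compute per token, not in memory: all of the weights still have to be resident, because any expert may be selected for the next token.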

Nemotron 3 Super is the second model in Nvidia’s Nemotron 3 family, following Nemotron 3 Nano from December 2025. Nvidia announced the release around March 10, 2026.

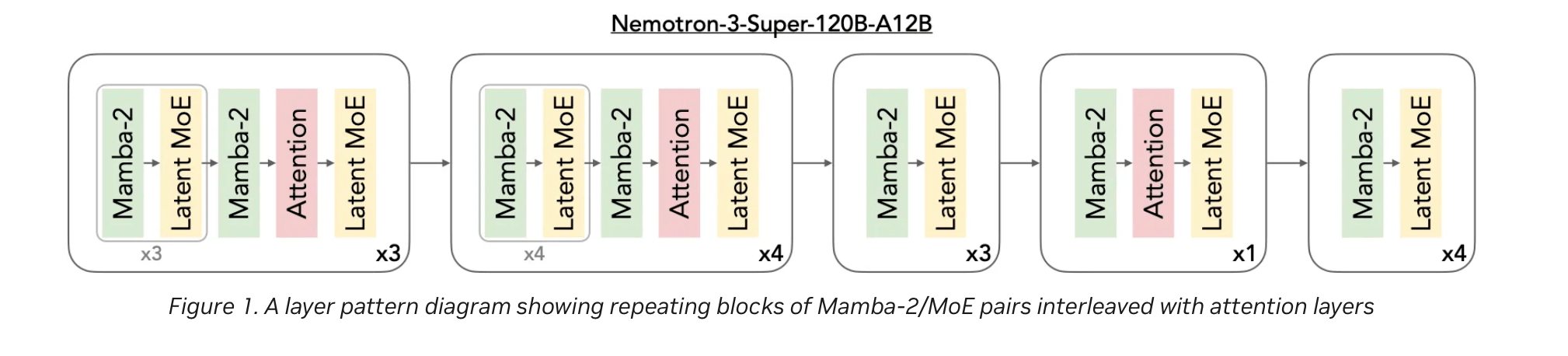

The model uses a hybrid Mamba-Transformer backbone across 88 layers. Mamba-2 blocks handle long sequences with linear-time efficiency, while Transformer attention layers preserve precise recall. That combination gives the model native support for context windows up to one million tokens without the memory penalties typical of pure-attention designs.
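The memory argument can be made concrete with a back-of-the-envelope comparison. In the sketch below, every attention layer caches one key and one value per token, so its cost grows with context length, while a Mamba-style layer keeps a fixed-size recurrent state. The split of 8 attention layers out of 88, the head sizes, and the state size are hypothetical values chosen for illustration; they are not Nemotron 3 Super's published configuration.

```python
# Compare cache memory for an all-attention stack vs a hybrid stack where only
# some layers are attention and the rest are Mamba-2 (state-space) layers.
# All layer counts and sizes below are HYPOTHETICAL illustration values.


def kv_cache_bytes(seq_len: int, n_attn_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Bytes needed to cache keys and values for one sequence.

    Each attention layer stores one K and one V vector per token per KV head,
    so this grows linearly with sequence length.
    """
    return 2 * seq_len * n_attn_layers * n_kv_heads * head_dim * bytes_per_elem


def mamba_state_bytes(n_mamba_layers: int, state_elems_per_layer: int,
                      bytes_per_elem: int = 2) -> int:
    """A Mamba-style layer keeps a fixed-size state, independent of seq_len."""
    return n_mamba_layers * state_elems_per_layer * bytes_per_elem


if __name__ == "__main__":
    SEQ_LEN = 1_000_000            # one-million-token context
    TOTAL_LAYERS = 88
    ATTN_LAYERS_HYBRID = 8         # hypothetical number of attention layers
    N_KV_HEADS, HEAD_DIM = 8, 128  # hypothetical attention shape
    STATE_ELEMS = 1_000_000        # hypothetical per-layer Mamba state size

    all_attention = kv_cache_bytes(SEQ_LEN, TOTAL_LAYERS, N_KV_HEADS, HEAD_DIM)
    hybrid = (kv_cache_bytes(SEQ_LEN, ATTN_LAYERS_HYBRID, N_KV_HEADS, HEAD_DIM)
              + mamba_state_bytes(TOTAL_LAYERS - ATTN_LAYERS_HYBRID, STATE_ELEMS))
    print(f"all-attention KV cache: {all_attention / 2**30:.1f} GiB")
    print(f"hybrid cache + state:   {hybrid / 2**30:.1f} GiB")
```

Whatever the real numbers, the structure of the calculation is the point: only the attention term scales with context length, so cutting the number of attention layers shrinks the part of memory that grows toward one million tokens.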

Nvidia also built in a LatentMoE routing system that compresses token embeddings into a low-rank space before sending them to 512 experts per layer, activating 22 at a time. The company says this allows roughly four times more experts at the same inference cost compared to standard MoE approaches, and enables finer task specialization, such as separating Python logic from SQL handling at the expert level.
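A toy version of the routing step may help. The sketch below compresses a token vector with a down-projection, scores all 512 experts, and keeps the 22 highest-scoring ones with softmax gate weights. Only the 512 and 22 come from the article; the tiny dimensions, random weights, the choice to score the compressed vector, and softmax-over-selected-experts gating are assumptions, and the experts' own computation is omitted.

```python
# Toy sketch: route a token in a compressed (latent) space with top-k selection.
# ASSUMPTIONS (not Nvidia's implementation): the router scores the latent
# vector, weights are random, and gates are a softmax over the chosen experts.
import math
import random

HIDDEN_DIM = 64     # hypothetical model width (real models are far wider)
LATENT_DIM = 16     # hypothetical low-rank width
NUM_EXPERTS = 512   # experts per layer, as reported
TOP_K = 22          # experts activated per token, as reported


def matvec(matrix, vec):
    """Multiply a (rows x cols) list-of-lists matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def random_matrix(rows, cols, rng):
    """Random Gaussian weights scaled by 1/sqrt(cols)."""
    scale = 1.0 / math.sqrt(cols)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def route(hidden, down_proj, router):
    """Compress a token vector, score every expert, and keep the top-k.

    Returns (latent, [(expert_id, gate_weight), ...]) with gates summing to 1,
    ordered from highest to lowest router score.
    """
    latent = matvec(down_proj, hidden)   # HIDDEN_DIM -> LATENT_DIM
    logits = matvec(router, latent)      # one score per expert
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:TOP_K]
    m = max(logits[i] for i in top)      # subtract max for numerical stability
    exps = [math.exp(logits[i] - m) for i in top]
    total = sum(exps)
    return latent, [(i, e / total) for i, e in zip(top, exps)]


if __name__ == "__main__":
    rng = random.Random(0)
    down_proj = random_matrix(LATENT_DIM, HIDDEN_DIM, rng)
    router = random_matrix(NUM_EXPERTS, LATENT_DIM, rng)
    token = [rng.gauss(0.0, 1.0) for _ in range(HIDDEN_DIM)]
    latent, chosen = route(token, down_proj, router)
    print(len(latent), len(chosen), round(sum(w for _, w in chosen), 6))
```

Scoring in the smaller latent space makes each router row shorter, which hints at why compressing first leaves room for more experts; the article does not detail how Nvidia arrives at its roughly fourfold figure.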

Multi-Token Prediction layers, using two shared-weight heads, speed up chain-of-thought generation and allow native speculative decoding. On structured tasks, Nvidia reports up to three times faster generation.
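Speculative decoding follows a draft-then-verify pattern: cheap predictions propose several tokens, and the full model checks them and keeps the prefix it agrees with. The sketch below shows the greedy variant, with two hypothetical toy functions standing in for the MTP draft heads and the full model; it illustrates the general technique, not Nvidia's implementation.

```python
# Greedy draft-then-verify loop, the basic pattern behind speculative decoding.
# toy_draft / toy_target are HYPOTHETICAL stand-ins for MTP heads and the model.
from typing import Callable, List

NextToken = Callable[[List[int]], int]


def speculative_step(prefix: List[int], draft_next: NextToken,
                     target_next: NextToken, k: int) -> List[int]:
    """Propose k draft tokens, then keep the longest prefix the target agrees with.

    Always returns at least one token: on the first mismatch the target's own
    token replaces the rejected draft; if every draft matches, the target adds
    one bonus token.
    """
    # 1. Draft k tokens autoregressively with the cheap predictor.
    ctx = list(prefix)
    proposed: List[int] = []
    for _ in range(k):
        tok = draft_next(ctx)
        proposed.append(tok)
        ctx.append(tok)

    # 2. Verify. A real system scores all k positions in one batched forward
    #    pass of the full model; this loop replays that check sequentially.
    ctx = list(prefix)
    accepted: List[int] = []
    for tok in proposed:
        expected = target_next(ctx)
        if expected != tok:
            accepted.append(expected)  # correction from the full model
            return accepted
        accepted.append(tok)
        ctx.append(tok)
    accepted.append(target_next(ctx))  # bonus token when all drafts pass
    return accepted


def toy_target(ctx: List[int]) -> int:
    """Toy 'full model': counts upward modulo 10."""
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[int]) -> int:
    """Toy draft head: agrees with the target except right after a 5."""
    return 0 if ctx[-1] == 5 else (ctx[-1] + 1) % 10


if __name__ == "__main__":
    print(speculative_step([1], toy_draft, toy_target, k=4))
    print(speculative_step([3], toy_draft, toy_target, k=4))
```

Under greedy decoding the accepted tokens are exactly what the full model would have produced alone; the speed-up comes from accepting several tokens per verification pass when the drafts are good. Sampling-based variants replace the equality check with a probabilistic acceptance test.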

The model was pre-trained on 25 trillion tokens in two phases: 20 trillion tokens of broad data, followed by five trillion higher-quality tokens aimed at benchmark performance. A final 51-billion-token phase extended the native context window to one million tokens. Post-training included supervised fine-tuning on roughly seven million samples and reinforcement learning across 21 environments with more than 1.2 million rollouts.

In benchmarks, Nemotron 3 Super scored 83.73 on MMLU-Pro, 90.21 on AIME25, and 60.47 on SWE-Bench using OpenHands. On PinchBench, it reached 85.6 percent, the highest reported score among open models in its class. On long-context evaluation, it scored 91.64 on RULER 1M.

Compared with GPT-OSS-120B, Nemotron 3 Super delivers 2.2 times the throughput at 8k input and 64k output tokens. Against Qwen3.5-122B-A10B, that figure reaches 7.5 times. Nvidia also reports more than five times the throughput and up to two times the accuracy of the previous Nemotron Super generation.

Nvidia trained the model end-to-end in its NVFP4 four-bit floating-point format, optimized for Blackwell GPUs. On B200 hardware, Nvidia says inference runs up to four times faster than FP8 on H100, with no reported accuracy loss. Quantized FP8 and NVFP4 checkpoints retain 99.8 percent or more of full-precision accuracy.
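NVFP4 stores each value as a 4-bit E2M1 float and shares a scale factor across small blocks of values (Nvidia describes 16-element blocks with an FP8 scale, plus a per-tensor scale). The sketch below shows only the block-scaling idea: it keeps scales as ordinary Python floats, omits the per-tensor scale, and breaks rounding ties toward the smaller magnitude, so it approximates the format rather than reproducing it bit for bit.

```python
# Simplified block-scaled 4-bit quantization in the spirit of NVFP4.
# SIMPLIFICATIONS: scales stay as Python floats (NVFP4 stores them in FP8),
# there is no per-tensor scale, and ties round toward the smaller magnitude.
from typing import List, Tuple

# Non-negative magnitudes representable by a 4-bit E2M1 float (sign is separate).
E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
E2M1_MAX = 6.0
BLOCK_SIZE = 16


def quantize_block(block: List[float]) -> Tuple[float, List[float]]:
    """Map one block to a shared scale plus E2M1 code values."""
    amax = max(abs(x) for x in block)
    scale = amax / E2M1_MAX if amax > 0 else 1.0  # largest |x| maps to 6.0
    codes = []
    for x in block:
        mag = abs(x) / scale
        q = min(E2M1_GRID, key=lambda g: abs(g - mag))  # nearest grid point
        codes.append(-q if x < 0 else q)
    return scale, codes


def fake_quantize(values: List[float], block_size: int = BLOCK_SIZE) -> List[float]:
    """Quantize, then immediately dequantize, to inspect the rounding error."""
    out: List[float] = []
    for start in range(0, len(values), block_size):
        scale, codes = quantize_block(values[start:start + block_size])
        out.extend(scale * c for c in codes)
    return out


if __name__ == "__main__":
    weights = [0.91, -0.07, 0.33, 0.5, -1.2, 0.02, 0.75, -0.4]
    for w, a in zip(weights, fake_quantize(weights)):
        print(f"{w:+.3f} -> {a:+.3f}")
```

Sharing one scale across a small block lets each block adapt to its own range, which is part of why a format this narrow can stay close to higher-precision accuracy.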

The model also powers the Nvidia AI-Q research agent, which reached the top position on the DeepResearch Bench leaderboard.

Nemotron 3 Super is fully open under the Nvidia Nemotron Open Model License. Checkpoints in BF16, FP8, and NVFP4 formats, along with pre-training data, post-training samples, and reinforcement learning environments, are available on Hugging Face. Inference is supported through Nvidia NIM, build.nvidia.com, Perplexity, OpenRouter, Together AI, Google Cloud, AWS, Azure, and CoreWeave, with on-premises options via Dell Enterprise Hub and HPE.

Developers can access training recipes, fine-tuning guides, and inference cookbooks through the NeMo platform using vLLM, SGLang, and TensorRT-LLM.
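As one hedged example of the serving side, both vLLM and SGLang can expose an OpenAI-compatible HTTP API. The sketch below builds a `/chat/completions` request body using only the Python standard library and includes an optional sender. `BASE_URL` assumes a local vLLM server on its default port 8000, `MODEL_ID` is a hypothetical placeholder for whichever checkpoint you actually serve, and the sampling values are arbitrary; the official cookbooks are the place to check recommended settings.

```python
# Build (and optionally send) a chat request to an OpenAI-compatible endpoint,
# such as a local vLLM or SGLang server. BASE_URL and MODEL_ID are PLACEHOLDERS.
import json
import urllib.request
from typing import Any, Dict, List

BASE_URL = "http://localhost:8000/v1"          # assumption: local vLLM default port
MODEL_ID = "<nemotron-3-super-checkpoint-id>"  # hypothetical: use the real repo id


def build_chat_request(prompt: str, max_tokens: int = 512,
                       temperature: float = 0.6) -> Dict[str, Any]:
    """Return a /chat/completions request body in the OpenAI wire format.

    The default max_tokens and temperature are arbitrary illustration values.
    """
    messages: List[Dict[str, str]] = [{"role": "user", "content": prompt}]
    return {
        "model": MODEL_ID,
        "messages": messages,
        "max_tokens": max_tokens,
        "temperature": temperature,
    }


def send(body: Dict[str, Any], base_url: str = BASE_URL,
         timeout: float = 60.0) -> Dict[str, Any]:
    """POST the body and return the parsed JSON reply (needs a running server)."""
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    body = build_chat_request("Summarize the trade-offs of hybrid Mamba-Transformer models.")
    print(json.dumps(body, indent=2))
    # With a server running:
    # reply = send(body)
    # print(reply["choices"][0]["message"]["content"])
```

Keeping request construction separate from the network call makes it easy to point the same payload at a local server or a hosted OpenAI-compatible endpoint by changing only the base URL.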